Overview

Product video

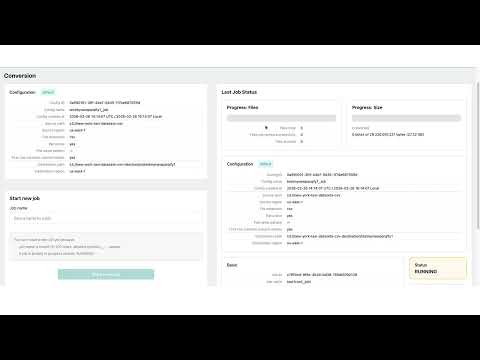

Parqify is a customer-managed AWS data conversion solution delivered as an AWS Marketplace AMI for organizations that need reliable CSV to Parquet and JSON to Parquet conversion in Amazon S3. Parqify runs entirely in the customer's AWS account on Linux and provides a web UI and API to create, monitor, and manage conversion jobs. It is designed for customers who want full control of data, IAM, storage, and networking without using an external SaaS control plane.

Parqify is built for common data lake preparation and AWS data engineering workflows where growing datasets increase storage usage, transfer time, read time, and query cost. CSV and JSON are flexible text formats, but they often become slower and more expensive to query as volume grows because engines must read and parse more data. Parqify converts CSV and JSON data stored in Amazon S3 into compressed Apache Parquet in S3, helping organizations standardize raw files into analytics-ready datasets. Converting to Parquet can reduce dataset size and improve read efficiency, although actual compression ratios and cost savings depend on data characteristics and query patterns.

Parqify is also designed to improve analytics performance in query engines and processing frameworks that support Parquet. Apache Parquet uses columnar storage, which can improve efficiency compared with text-based formats for many analytics workloads. In engines that support these optimizations, Parquet enables predicate pushdown and column pruning, which may significantly reduce scanned data and improve query performance. This makes Parqify a practical Parquet optimization solution for organizations using Amazon Athena, Amazon Redshift, and Apache Spark, especially when the goal is efficient CSV to Parquet and JSON to Parquet conversion with source and destination in Amazon S3.

Operational reliability and data quality are also a core focus. CSV and JSON structures may drift over time, manual schema maintenance can be brittle, and field mismatches can affect downstream jobs. Parqify supports schema inference from sampled input and can reuse cached schemas for matching file structures, reducing manual schema work and helping produce consistent, analytics-ready Parquet outputs. This helps organizations reduce errors in batch file conversion and improve repeatability for ongoing data ingestion and data lake preparation workflows.
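
To make the idea of schema inference with cached schema reuse concrete, here is a minimal sketch in Python. It is not Parqify's implementation: the function names, the three-type lattice (`int64`, `double`, `string`), the header-based cache key, and the in-memory cache are all assumptions chosen for illustration.

```python
import csv
import hashlib
import io

# Hypothetical in-memory cache: header fingerprint -> inferred schema.
_schema_cache = {}


def _infer_type(values):
    """Pick the narrowest type that fits every non-empty sampled value."""
    non_empty = [v for v in values if v != ""]
    if not non_empty:
        return "string"
    for type_name, caster in (("int64", int), ("double", float)):
        try:
            for v in non_empty:
                caster(v)
            return type_name
        except ValueError:
            continue
    return "string"


def infer_schema(csv_text, sample_rows=100):
    """Infer {column: type} from the first rows of a CSV document.

    Files whose header matches one seen earlier reuse the cached schema
    instead of being sampled again (assumed cache key: the header row).
    """
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)
    key = hashlib.sha256("\x1f".join(header).encode("utf-8")).hexdigest()
    if key in _schema_cache:
        return _schema_cache[key]
    sample = [row for _, row in zip(range(sample_rows), reader)]
    schema = {
        name: _infer_type([row[i] for row in sample if i < len(row)])
        for i, name in enumerate(header)
    }
    _schema_cache[key] = schema
    return schema
```

One consequence of this design is that a second file with the same header gets the cached schema even if its values would infer differently, which trades some accuracy for consistent output types across a batch.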

Parqify is a purpose-built ETL alternative for organizations that do not need a full ETL platform for straightforward format conversion. Instead of introducing a larger pipeline orchestration stack for a single task, organizations can use Parqify as a focused customer-managed data conversion platform for reliable file processing. The platform emphasizes format conversion, operational visibility, and repeatable execution rather than full ETL pipeline orchestration, making it a strong fit for businesses that want lower operational overhead and faster time to value.

Key capabilities include a web UI and API for job creation and job management, parallel processing for higher-throughput batch file conversion, schema inference and cached schema reuse, job tracking, status tracking, job history, and downloadable logs. Parqify supports deployment in public or private subnets, including private-only VPC endpoints, and is suitable for customers that need private networking options in AWS. Secure first-time activation is supported with a one-time activation code, followed by administrator password setup. All processing remains in the customer's AWS environment.

Parqify is a strong fit for organizations looking for a customer-managed data conversion solution, a Parquet conversion tool, an Apache Parquet converter, a data lake preparation tool, or an AWS data engineering tool for analytics workflows built on Amazon Athena, Amazon Redshift, and Apache Spark.

Highlights

- Customer-managed AWS Marketplace AMI for reliable CSV to Parquet and JSON to Parquet conversion in Amazon S3. Parqify runs entirely in your AWS account on Linux and provides a web UI and API to create, monitor, and manage conversion jobs without an external SaaS control plane.

- Convert CSV and JSON data in Amazon S3 into compressed Apache Parquet to support lower storage usage and better read efficiency. In engines that support it, Parquet enables columnar storage benefits such as predicate pushdown and column pruning, which may reduce scanned data and improve query performance.

- Built for production data lake preparation with schema inference, cached schema reuse, parallel processing, job tracking, status tracking, and downloadable logs. Supports deployment in public or private subnets, including private-only VPC endpoints, while keeping data, IAM, storage, and networking under customer control.

Details

Pricing

| Dimension | Cost/hour |
|---|---|
| c6in.2xlarge (Recommended) | $0.45 |
| c6in.4xlarge | $0.80 |
| c6in.xlarge | $0.25 |
| c6in.8xlarge | $1.50 |
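
As a rough budgeting aid, the hourly rates above can be turned into a cost estimate for a given number of running hours. The sketch below is illustrative only: the function name is hypothetical, the 730-hour month is a common approximation, and the result covers only the rates listed in the table (any separately billed AWS infrastructure charges are not included).

```python
from decimal import Decimal

# Hourly rates copied from the pricing table (USD per hour).
HOURLY_RATES = {
    "c6in.xlarge": Decimal("0.25"),
    "c6in.2xlarge": Decimal("0.45"),
    "c6in.4xlarge": Decimal("0.80"),
    "c6in.8xlarge": Decimal("1.50"),
}


def estimate_cost(instance_type, hours):
    """Return the listed hourly rate multiplied by the hours run."""
    # Decimal(str(...)) avoids binary floating-point rounding surprises.
    return HOURLY_RATES[instance_type] * Decimal(str(hours))


# Assumed example: the recommended size running for about one month.
monthly = estimate_cost("c6in.2xlarge", 730)
```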

Vendor refund policy

There is no refund policy, but you can try Parqify™ for free to see if it meets all your needs.

Delivery details

64-bit (x86) Amazon Machine Image (AMI)

Amazon Machine Image (AMI)

An AMI is a virtual image that provides the information required to launch an instance. Amazon EC2 (Elastic Compute Cloud) instances are virtual servers on which you can run your applications and workloads, offering varying combinations of CPU, memory, storage, and networking resources. You can launch as many instances from as many different AMIs as you need.

Version release notes

Parqify for AWS Marketplace: Release Notes (v1.0.0 Initial Release)

This is the first AWS Marketplace release of Parqify. Parqify provides a web-based interface and backend service to run data conversion workflows (including CSV/JSON to Parquet) on your AWS infrastructure.

Additional details

Usage instructions

1. Subscribe to Parqify in AWS Marketplace and choose the AMI delivery option.
2. Launch an EC2 instance from the Parqify AMI in your target AWS Region.
3. Select an instance type supported by this product: c6in.xlarge, c6in.2xlarge, c6in.4xlarge, or c6in.8xlarge.
4. Configure networking:
   - Public deployment: launch in a public subnet with a public IPv4.
   - Private deployment: launch in a private subnet and ensure private connectivity from your client network.
5. Configure the security group:
   - Allow HTTP 80 from your trusted client CIDR.
   - Allow SSH 22 from your trusted admin CIDR.
   - Avoid open access from 0.0.0.0/0 unless required for testing.
6. Configure storage: use a root EBS volume of at least 30 GiB (gp3 recommended).
7. Attach an EC2 instance profile (IAM role) with required S3 permissions for your source and destination buckets.
8. Launch the instance and wait until it reaches running and status checks pass.
9. Retrieve the one-time activation code: in the EC2 console, open the instance and go to Actions -> Monitor and troubleshoot -> Get system log. Search for: PARQIFY INITIAL ACTIVATION CODE (ONE-TIME):
10. Open the Parqify UI:
    - Public deployment: http://<public-ip>/
    - Private deployment: http://<private-ip>/ from a connected path (VPN / Direct Connect / bastion / Session Manager tunnel).
11. On first login, enter the one-time Activation Code, then set a new password when prompted.
12. Run a simple conversion job:
    - Example source: s3://my-source-bucket/input/sample.csv
    - Example destination: s3://my-destination-bucket/output/
    - Start the job, monitor progress in the UI, and wait for completion.
13. Verify output files in the destination S3 bucket/prefix (Parquet output).
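
If you have saved the system log text and would rather not search it by eye, the activation code lookup can be sketched in Python. The marker string is taken from the instructions above. Everything else is an assumption: the listing does not document the code's format or whether it appears on the same line as the marker, so this hypothetical helper checks the rest of the marker line first and then the next non-empty line.

```python
MARKER = "PARQIFY INITIAL ACTIVATION CODE (ONE-TIME):"


def find_activation_code(console_log):
    """Return the text following the marker, or None if it is absent.

    Assumption: the code is either the remainder of the marker line or
    the next non-empty line. Adjust this if the real log differs.
    """
    lines = console_log.splitlines()
    for i, line in enumerate(lines):
        pos = line.find(MARKER)
        if pos == -1:
            continue
        rest = line[pos + len(MARKER):].strip()
        if rest:
            return rest
        for following in lines[i + 1:]:
            if following.strip():
                return following.strip()
    return None


# Hypothetical log excerpt; the real code format is not documented here.
sample_log = "boot: starting\nPARQIFY INITIAL ACTIVATION CODE (ONE-TIME): EXAMPLE-CODE\n"
code = find_activation_code(sample_log)
```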

Troubleshooting:

- If you see S3 AccessDenied, verify IAM role permissions and bucket/KMS policies.
- If a private deployment cannot reach required AWS services, verify subnet egress (NAT and/or existing VPC endpoints) per your network policy.
- If the activation code is missed in the console logs, access the instance and read parqify-bootstrap.env.

For more detailed instructions, visit: https://parqify.com.
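
When checking IAM role permissions after an S3 AccessDenied error, it can help to compare the role against a minimal policy. The Python sketch below builds one as a plain dictionary. The exact S3 actions Parqify requires are not listed on this page, so the three actions used here (`s3:ListBucket`, `s3:GetObject`, `s3:PutObject`) are an assumption, and the function name is hypothetical. Buckets encrypted with KMS would also need key permissions that this sketch omits.

```python
import json


def build_s3_policy(source_bucket, source_prefix, dest_bucket, dest_prefix):
    """Build an IAM policy document: read from source, write to destination.

    Assumed minimal action set; confirm against the vendor documentation.
    """
    bucket_arns = sorted(
        {f"arn:aws:s3:::{source_bucket}", f"arn:aws:s3:::{dest_bucket}"}
    )
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListBuckets",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": bucket_arns,
            },
            {
                "Sid": "ReadSource",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": [f"arn:aws:s3:::{source_bucket}/{source_prefix}*"],
            },
            {
                "Sid": "WriteDestination",
                "Effect": "Allow",
                "Action": ["s3:PutObject"],
                "Resource": [f"arn:aws:s3:::{dest_bucket}/{dest_prefix}*"],
            },
        ],
    }


# Bucket names and prefixes follow the example job in the usage instructions.
policy = build_s3_policy(
    "my-source-bucket", "input/", "my-destination-bucket", "output/"
)
policy_json = json.dumps(policy, indent=2)
```

The resulting JSON can be compared with the policy attached to the instance profile to spot a missing action or a resource ARN that does not cover the job's bucket and prefix.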

Support

Vendor support

Please contact us via email: support@nademark.com

AWS infrastructure support

AWS Support is a one-on-one, fast-response support channel that is staffed 24x7x365 with experienced and technical support engineers. The service helps customers of all sizes and technical abilities to successfully utilize the products and features provided by Amazon Web Services.